Joon Sung Park and his team built one of the most convincing experiments on episodic memory in LLM agents in 2023 — and never once cited Endel Tulving. Yet their architecture is the most precise implementation of his memory taxonomy ever built.

Not just uncited: if you search the paper (Park et al., 2023) for "Tulving", "episodic memory", "autonoetic" or "mental time travel", you get zero hits. The only classical cognitive psychologist in the reference list is John R. Anderson with Rules of the Mind — ACT-R, a different tradition. Tulving's vocabulary doesn't appear in the paper. His architecture does.

A quick note on the setup, in case you don't know the paper: Park et al. put 25 AI agents into a simulated small town modeled on The Sims. The agents wake up, eat breakfast, go to work, meet in the afternoon, exchange information, plan evening activities. Each agent has its own background description (name, occupation, relationships) and a memory architecture that grows during the simulation. This architecture is described in the learning document of the Tulving series as "the most elaborate implementation of episodic memory in an AI system to date" — hence this deep dive.

In Part 2 I introduced Tulving's episodic-semantic memory model as an explanatory framework for agent architectures. What was theory there becomes evidence here: Park et al. rebuilt exactly that model without knowing it. This article walks through the correspondence component by component, brings the empirical proof — and marks, at the end, the one Tulving feature that no architecture can simulate.

The plan: three components in detail (Memory Stream, Reflection, Planning), the three-dimensional retrieval mechanism, the ablation study with its emergent social behavior, the autonoetic boundary, and an outlook on collective memory as an open question.

Memory Stream = Episodic Memory

What Park introduces as Memory Stream is, substantively, episodic memory in Tulving's definition, except that the paper never uses that name.

Every Memory Stream entry consists of exactly four fields:

| Field | Content |
|---|---|
| Natural-language description | What happened, from the agent's perspective |
| Creation timestamp | When the event was observed |
| Last access timestamp | When the entry was last retrieved |
| Importance Score | How significant the event is |

Picture a chronological list of such entries that grows with every action, every dialogue, every observation. That's the complete Memory Stream — an autobiographical log in natural language. With 25 agents and one simulated day, Memory Streams grow into hundreds of entries per agent: from "makes coffee in the kitchen" to "talked with Maria about her party". The Importance Score isn't set statically — it's assigned by the LLM itself when the entry is created: a trivial everyday entry gets low values, an emotionally or motivationally significant event (a date, an argument, a new acquaintance) gets higher ones.

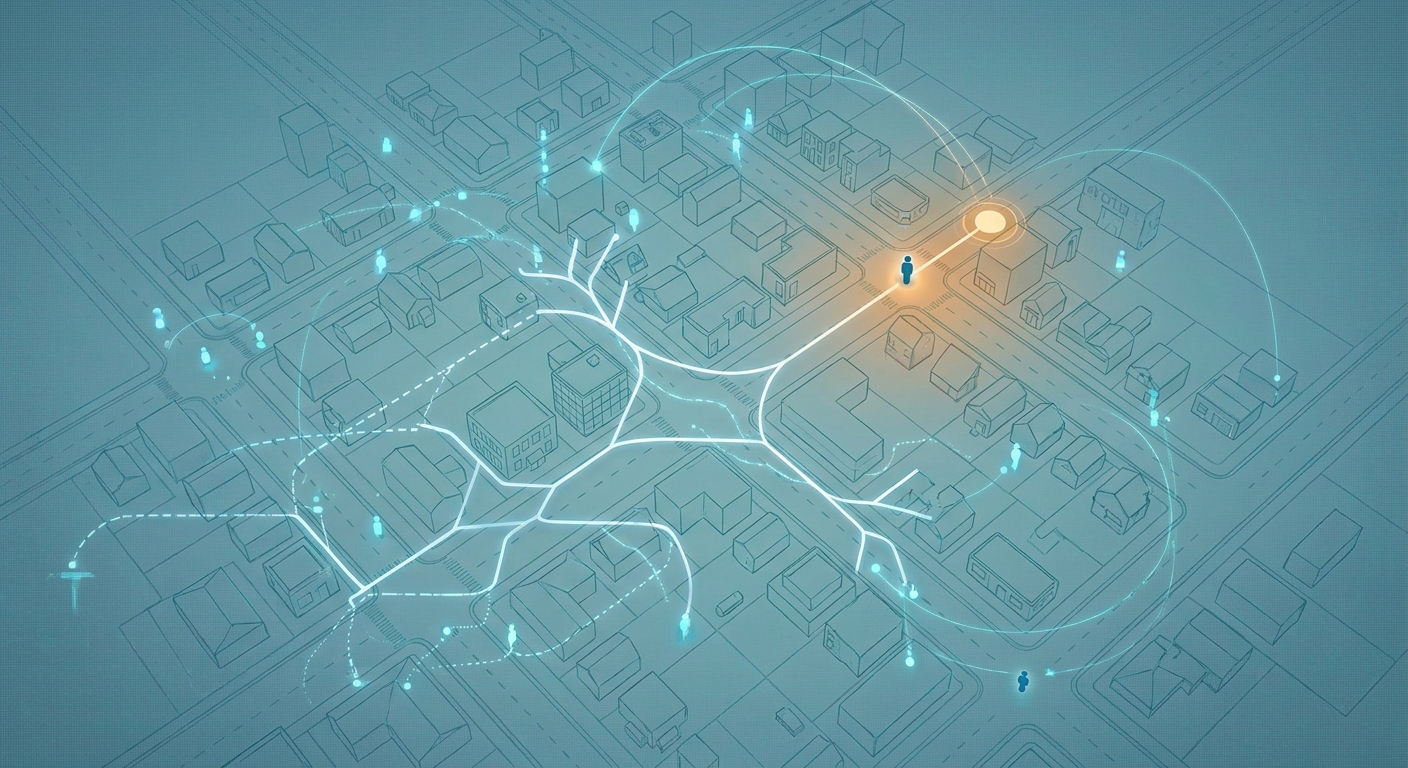

Now the Tulving mapping. Episodic memory is defined in Tulving's taxonomy by four core features. Park implements each of them without naming it:

| Tulving feature | Memory Stream implementation |
|---|---|
| Temporal dating | Creation timestamp |
| Autobiographical reference | Agent-specific perspective |
| Experiential reference | Natural-language description from the agent's viewpoint |
| Sequential organization | Chronological list structure |

An example entry might read: "Abe enters the café. He sees Maria sitting at a window table and waves to her." Timestamp 2023-02-13T14:33, last retrieved during the most recent conversation about Maria, Importance Score 3 (everyday, no particular significance). Another entry, hours later, might read: "Maria told me she's moving next week." Same Abe, same Memory Stream, but this entry gets Importance 8 — it will be preferentially weighted in later retrievals because it becomes central to the relationship with Maria and to Abe's future planning.
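As a rough illustration, the two example entries can be written as records with the four fields from the table. This is a minimal sketch, not the paper's code: the names `MemoryEntry`, `MemoryStream`, and `observe` are hypothetical, the time of the second entry is an assumed value for "hours later", and the importance scores are passed in by hand where the real system would ask the LLM.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass
class MemoryEntry:
    """One Memory Stream record (hypothetical names, four fields)."""
    description: str         # natural-language description, agent's perspective
    created_at: datetime     # creation timestamp
    last_accessed: datetime  # last access timestamp
    importance: int          # assigned by the LLM in the paper; hard-coded here


class MemoryStream:
    """Append-only list of entries in chronological order."""

    def __init__(self) -> None:
        self.entries: List[MemoryEntry] = []

    def observe(self, description: str, now: datetime, importance: int) -> MemoryEntry:
        # A new entry counts as "last accessed" at the moment it is created.
        entry = MemoryEntry(description, created_at=now, last_accessed=now,
                            importance=importance)
        self.entries.append(entry)
        return entry


stream = MemoryStream()
stream.observe(
    "Abe enters the café. He sees Maria sitting at a window table and waves to her.",
    datetime(2023, 2, 13, 14, 33),
    importance=3,
)
stream.observe(
    "Maria told me she's moving next week.",
    datetime(2023, 2, 13, 18, 5),  # assumed time, for illustration only
    importance=8,
)
```

The list order carries the sequential organization, and the two timestamps carry the temporal dating; nothing else is needed for the structural mapping.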

Four fields, four features — the correspondence is structural, not rhetorical. What is an engineering data structure in the paper is a memory type with its own name in Tulving's framework.

But episodes alone aren't enough. Tulving describes how action-relevant knowledge emerges from repeated experiences — and Park has his own mechanism for that.

Reflection = Episodic-to-Semantic Consolidation

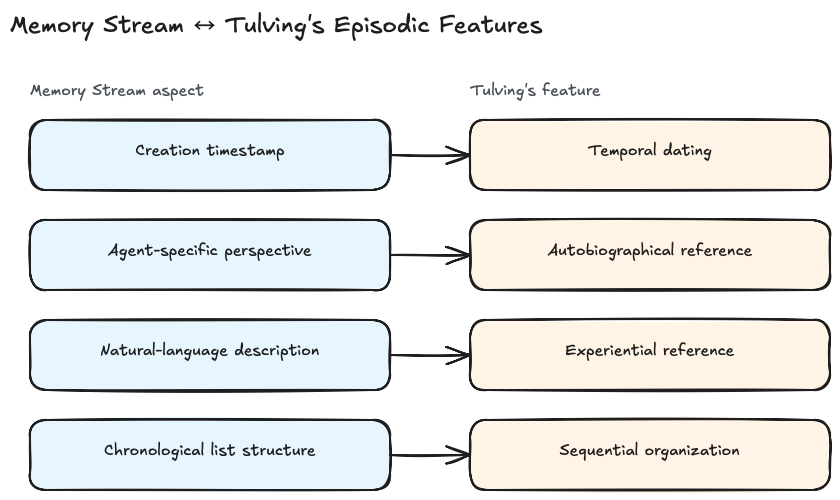

Reflection is, in Tulving's language, episodic-to-semantic consolidation — Park shows the process in four stages, Tulving in a single sentence.

The mechanism runs periodically and in stages. Stage one is the trigger: as soon as the cumulative Importance Scores of recent memories cross a threshold, a reflection round begins. This isn't a random clock but a significance-threshold gate — the agent reflects when enough has happened, not on a schedule.

Stage two is the synthesis. The LLM receives a selection of recent episodic memories as input and is asked to formulate more abstract statements about them. From a series of specific interactions emerges, for example, the reflection "I am now interested in learning to ski" or "Klaus has been working on painting recently". These are no longer individual events but patterns — decontextualized, generalized, action-relevant.

Stage three: These reflections are written back into the Memory Stream, but with a different entry-type marker. They are now episodic entries about episodic entries — a first abstraction step.

Stage four closes the loop. On later retrieval operations, reflections can be retrieved just like primary memories — and they can serve as input in future reflection cycles. Reflection of the second order, third order, ad infinitum. A reflection like "Klaus has been working on painting recently" can itself become input for a higher-order reflection such as "Klaus is going through a creative phase and is seeking conversations about art" — that's no longer a single observation but interpretive knowledge about a person.
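The four stages can be sketched in a few lines. Everything here is an assumption made for illustration: the names `Entry` and `maybe_reflect`, the threshold of 10, the fixed importance given to reflections, and the `stub_synthesize` function that stands in for the LLM call.

```python
from typing import Callable, List, NamedTuple


class Entry(NamedTuple):
    text: str
    importance: int
    kind: str  # entry-type marker: "observation" or "reflection"


def maybe_reflect(
    stream: List[Entry],
    cursor: int,
    threshold: int,
    synthesize: Callable[[List[Entry]], List[str]],
    reflection_importance: int = 5,  # assumed value, not from the paper
) -> int:
    """Run one reflection round if enough importance has accumulated.

    `cursor` is the index of the first entry not yet covered by a
    reflection round. Returns the updated cursor.
    """
    recent = stream[cursor:]
    # Stage 1: significance-threshold gate, not a clock.
    if sum(e.importance for e in recent) < threshold:
        return cursor
    # Stage 2: synthesis (an LLM call in the real system, a stub here).
    insights = synthesize(recent)
    # Stage 3: write back into the same stream with a different marker.
    new_cursor = len(stream)
    for text in insights:
        stream.append(Entry(text, reflection_importance, "reflection"))
    # Stage 4: the cursor points at the new reflections, so the next
    # round sees them as input and can build higher-order reflections.
    return new_cursor


def stub_synthesize(entries: List[Entry]) -> List[str]:
    return [f"Pattern across {len(entries)} memories"]


stream = [
    Entry("Klaus mixes paint", 4, "observation"),
    Entry("Klaus sketches a landscape", 4, "observation"),
]
cursor = maybe_reflect(stream, 0, threshold=10, synthesize=stub_synthesize)
stream.append(Entry("Klaus talks about his exhibition", 6, "observation"))
cursor = maybe_reflect(stream, cursor, threshold=10, synthesize=stub_synthesize)
```

The first call does nothing because the accumulated importance is below the threshold; the second call appends one reflection. Leaving the cursor on the new reflections is one possible way to make second-order reflection fall out of the same loop.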

In cognitive-psychological terms, the meaning can be stated briefly but precisely: the process mirrors the psychological observation that episodic experiences are consolidated into semantic knowledge over time, and that this semantic knowledge in turn shapes the interpretation of new experiences. That is the feedback loop between Tulving's episodic and semantic systems, written in code, without the code ever asking about that model.

What makes Reflection different from plain logging is the direction of abstraction. A logging system records what happened. Reflection decides what of it is significant — and forms from that statements which go beyond the individual case. In memory-psychology terminology, that's the step where episodic material turns into semantic material. In LLM-engineering terminology, it's an abstraction pipeline. Both descriptions apply to the same mechanism.

Four stages, one Tulving pattern — consolidation has been documented for decades. Park doesn't need to open a psychology reference for this mechanism, because the pattern arises from the task itself: anyone who wants to extract action-relevant knowledge from individual experiences inevitably arrives at this process.

And if Reflection is retrospection — the look at what has been — then its counterpart is prospection: the look at what may come. That's where Park's third component enters.

Planning = Prospection and Mental Time Travel

Planning is, in Tulving's vocabulary, prospective mental time travel — forming future drafts from memories.

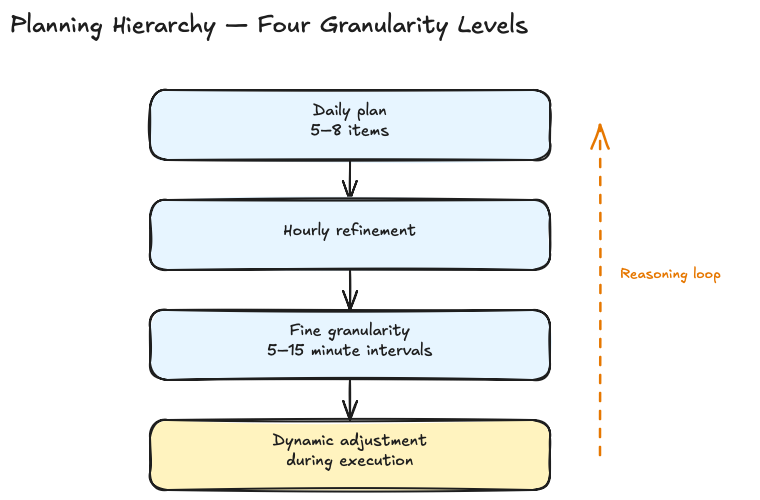

Park decomposes this process into four granularity levels:

- Daily plan. In the morning, the agent generates a coarse plan for the day from its base description and a summary of the previous day — typically five to eight items.

- Hourly refinement. Each coarse item is detailed at the hour level.

- Fine granularity. Individual hours are broken down into intervals of five to fifteen minutes.

- Dynamic adjustment. During execution, the agent receives a stream of observations, can reason about them, and keep or revise the plan.
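The four levels above can be sketched as a small tree of plan items. This is an illustration under stated assumptions: `PlanItem`, `split_evenly`, `refine`, and `replan_from` are hypothetical names, equal-length splitting stands in for the LLM's refinement, and the revised plan is supplied by hand where the real agent would generate it.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PlanItem:
    label: str
    start_min: int     # minutes since midnight
    duration_min: int
    children: List["PlanItem"] = field(default_factory=list)


def split_evenly(item: PlanItem, chunk_min: int) -> None:
    """Stand-in for an LLM refinement call: cut an item into equal chunks."""
    t = item.start_min
    end = item.start_min + item.duration_min
    while t < end:
        length = min(chunk_min, end - t)
        item.children.append(PlanItem(f"{item.label} (part)", t, length))
        t += length


def refine(day: List[PlanItem]) -> None:
    for block in day:                # level 1: coarse daily items
        split_evenly(block, 60)      # level 2: hourly refinement
        for hour in block.children:
            split_evenly(hour, 15)   # level 3: intervals of 5-15 minutes


def replan_from(day: List[PlanItem], now_min: int,
                new_items: List[PlanItem]) -> List[PlanItem]:
    """Level 4: keep what already happened, replace everything after `now_min`."""
    kept = []
    for block in day:
        if block.start_min >= now_min:
            continue  # not started yet: dropped in favour of the new items
        end = min(block.start_min + block.duration_min, now_min)
        kept.append(PlanItem(block.label, block.start_min, end - block.start_min))
    revised = kept + new_items
    refine(revised)
    return revised


day = [
    PlanItem("work in studio", 13 * 60, 4 * 60),  # 1 p.m. to 5 p.m.
    PlanItem("dinner at home", 18 * 60, 60),
]
refine(day)
# Abe meets Maria at 3 p.m.; the rest of the day is rewritten.
revised = replan_from(day, 15 * 60, [PlanItem("coffee with Maria", 15 * 60, 60)])
```

In this sketch the studio block is cut off at 3 p.m. and the untouched evening item is discarded, which is a deliberately blunt policy; the point is only that the plan is data that can be rewritten mid-execution.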

That's top-down hierarchical decomposition in the spirit of a classical Hierarchical Task Network, technically nothing new. The dynamic adjustment at level four is where the system moves from script execution to reactive agency: if Abe had planned to work in his studio until 5 p.m. but unexpectedly meets Maria at 3 p.m., his Planning can rewrite the afternoon with a shared coffee, an altered evening plan, and a new reflection at the end of the day. The plan is not a fixed schedule but a dynamically rewritten field of intentions.

But the interesting point is elsewhere — at a place Park treats as an aside but which carries the architectural punchline: the Reflection and the Planning components use the same large language model.

Using the same LLM for both reflection (retrospection) and planning (prospection) is the AI parallel to the neuroscientific finding of overlapping neural substrates for remembering and future thinking. Why is that a finding? Because cognitive neuroscience has shown, since the 2000s, that the same brain regions — in particular the hippocampus and prefrontal networks — are active both during episodic remembering and during the mental construction of future scenarios. Tulving described this early on as mental time travel: looking back and looking forward are two uses of the same neural machinery, not two separate systems.

Four levels, one Tulving principle — and the architectural choice to use the same LLM for hindsight and foresight isn't thrift, it's a structure-generated parallel to a neuroscientific reality.

Retrieval Scoring: Three Dimensions, Three Principles

Three components are in place — Memory Stream stores, Reflection abstracts, Planning projects. Now the question: how do you pull the right slice out at any given moment?

An LLM's context window is limited. For an agent that has accumulated hundreds or thousands of episodic entries, the most relevant ones have to be selected. Park picks a weighted sum over three dimensions:

Score = α_recency · recency + α_importance · importance + α_relevance · relevance

In the implementation, all α values are set to 1, and the partial scores are brought into the range [0, 1] via min-max normalization. That the three dimensions are equally weighted isn't a psychological claim but deliberate simplicity — Park shows that the convergence with the psychological findings lives at the structural level, not in finely tuned weightings. The three dimensions in detail:

| Dimension | Computation | Psychological motivation |
|---|---|---|
| Recency | Exponential decay based on the last access time | More recent memories are more easily retrieved (Recency Effect) |
| Importance | LLM-generated integer score for the event's significance | Emotionally or motivationally significant memories are preferentially encoded and retrieved |
| Relevance | Cosine similarity between memory embedding and query embedding | Contextual match: a computational instantiation of the Encoding Specificity Principle |

The memories with the highest total scores that fit into the context window are included in the prompt.
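The weighted sum can be written out directly. The following is a minimal sketch with assumed details: the function names, the toy two-dimensional embeddings, and the decay rate of 0.995 per hour are chosen for illustration, and a column whose values are all equal is mapped to zero, a choice the description above does not specify.

```python
import math
from typing import List, Sequence


def min_max(values: Sequence[float]) -> List[float]:
    """Scale values into [0, 1]; a constant column becomes all zeros (assumption)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieval_scores(
    hours_since_access: Sequence[float],
    importance: Sequence[float],
    embeddings: Sequence[Sequence[float]],
    query: Sequence[float],
    decay: float = 0.995,            # illustrative decay rate per hour
    alphas=(1.0, 1.0, 1.0),          # all weights set to 1, as in the text
) -> List[float]:
    recency = min_max([decay ** h for h in hours_since_access])
    significance = min_max(importance)
    relevance = min_max([cosine(e, query) for e in embeddings])
    a_rec, a_imp, a_rel = alphas
    return [
        a_rec * r + a_imp * i + a_rel * v
        for r, i, v in zip(recency, significance, relevance)
    ]


# Three toy memories: fresh but trivial, important and on-topic, old and partly related.
scores = retrieval_scores(
    hours_since_access=[1, 10, 100],
    importance=[3, 8, 5],
    embeddings=[[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],
    query=[0.0, 1.0],
)
ranked = sorted(range(len(scores)), key=scores.__getitem__, reverse=True)
```

Walking down `ranked` and adding entries until the context budget is used up gives the selection step. With equal weights, the second memory wins here because it leads on two of the three dimensions, even though it is not the most recent.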

What's happening here is the same story for the third time in the article: an engineering solution follows, without the authors ever saying so, an established psychological finding. The Recency Effect has been measured in memory research since the 1960s and is part of the basic repertoire of any introduction to cognitive psychology. Emotional salience as an encoding modulator has been standard for decades — emotionally charged events are preferentially encoded, retained longer, and retrieved more easily. The Encoding Specificity Principle — retrieval works when the retrieval context resembles the encoding context — is Tulving & Thomson 1973, one of the most cited papers in memory research. Each of the three retrieval dimensions has a published counterpart in memory psychology.

Park marks none of them explicitly. They simply stand there, as if it were self-evident that retrieval must work this way.

Emergent Social Behavior and the Ablation Proof

Is that enough to produce believable behavior? Park's own answer is noteworthy: out of the combination of Memory Stream, Reflection, and Planning, emergent social behavior arose that the developers had not explicitly programmed.

Three concrete phenomena:

Information spreading through social networks. In an often-cited experiment, a single user gives one agent the prompt to plan a Valentine's Day party. Over two simulated days, the information spreads through the simulated small town: invitations are issued, agents ask each other for company, some arrange dates. No one coded the spreading behavior. It arises from agents who remember in their Memory Stream that they heard about the party, formulate in their Reflection that they want to attend, and take up corresponding steps in their Planning.

Activity coordination. Agents don't just meet at isolated points — they synchronize shared activities across several steps. That presupposes each agent records the plans and commitments of others in its own Memory Stream and takes them into account in later planning rounds.

Relationship maintenance over time. Across several simulated days, agents develop more stable patterns toward specific others: more frequent visits, shifted conversational shares, targeted seeking out. Relationship is thus not an attribute but the emergent consequence of repeated episodic entries, which Reflection condenses into relationship-semantics.

That alone is not yet proof that all three components are necessary. The proof comes from the ablation study: each of the three components — Observation, Planning, and Reflection — was critical for the believability of agent behavior. Removing individual components led to statistically significant drops in perceived authenticity.

Translated: without Observation (the recording side of the Memory Stream), the agents lose their episodic foundation. Without Reflection, they stay stuck in the immediate — they have episodes but no patterns. Without Planning, the prospective dimension is gone. No component is a sham component; all of them carry weight.

That's the experimental side of the convergence thesis. If Park's architecture were only superficially Tulving-compatible, ablations should produce softer findings somewhere — a redundant component, an element that could be trimmed without loss of authenticity. That doesn't happen. Each of the three components makes an independent contribution, just as Tulving's three memory types each have an independent functional status. An architectural facade without Tulving content would have collapsed under the ablation. It doesn't collapse.

The Autonoetic Boundary

This is where the story could end: Tulving reconstructed, emergence proven. But one Tulving feature is missing, because it doesn't fit the architecture.

Tulving distinguishes episodic memory from semantic memory not only by content (specific events vs. abstracted knowledge) but by an additional dimension: subjective re-experience. Autonoetic consciousness is his name for it — the characteristic "I was there" feeling during remembering, the presentness of one's own past. This is not just information-about-oneself, but lived temporality. (If you haven't read that yet: Part 2 has the more detailed section on autonoetic consciousness.)

That is exactly what the Generative Agents architecture lacks: the Memory Stream simulates the structure of episodic remembering, but not the subjective re-experience. The agent "knows" what it has experienced, but it does not "experience it again".

This isn't an engineering gap that a version 2 will close. It's a categorical observation: computation can simulate structure — the fields, the stages, the hierarchies — but subjective experience is, by definition, not capturable as structure. Any simulation of experiencing is a simulation of structure, not of experience. That's no flaw of the Park architecture but the boundary of the analogy between conscious processes and computable models.

That makes the convergence thesis, in the end, not weaker but sharper. Park et al. reconstructed Tulving's memory architecture without citing Tulving. They established the structural correspondence in every component. They showed in the ablation that this structural correspondence is functionally necessary. They reconstructed everything — except the one feature that Tulving himself identified as the boundary between computable model and lived remembering.

Collective Memory as an Open Question

And the autonoetic boundary isn't the only open question.

Tulving's framework and the Generative Agents architecture both describe individual memory. As soon as multiple agents interact, a space opens up that neither psychology nor engineering practice has mapped theoretically so far: how do multi-agent systems remember as a group? The established multi-agent state patterns (Shared State, Message Passing, Blackboard, Event-Driven) are synchronization architectures, not memory models. They solve the problem of how agents communicate, not how they remember together.

This article was originally published on Medium.